Network and web applications generate metrics, which we usually just shovel into a library without thinking much about their true meaning or performance cost. This week I decided to read through the existing Go libraries, got halfway through writing my own, dropped it, and finally wrote this guide aimed at last-week-me.

If you know all about Exponentially Weighted Moving Averages, this might be a bit boring. Also, you might find me spectacularly wrong: I'm definitely not a data scientist. If so, let me know.

The age of the TSDB

The first thing to understand is that not all metrics play the same role. In forgotten times, metrics had a really tough job to do with really tight resources: they had to give you an idea of how a value evolved over time by showing you only one or a few numbers, while consuming a small, bounded amount of memory.

Think, for example, of the Unix load averages, those three numbers shown in top to give you an idea of how loaded the machine has been in the last few minutes. 3.9 3.1 1.2 would tell you, for example, that the machine has been running at about 400% for the last 3 to 6 minutes--not 1, not 20. That's not bad, and thanks to some fancy math (EWMA, we'll talk about it later) each of them only takes up as much space as an integer.

However, do you know what's great at showing you how a value changed? A graph. Nothing beats showing a graph of the exact actual load of the machine over time.

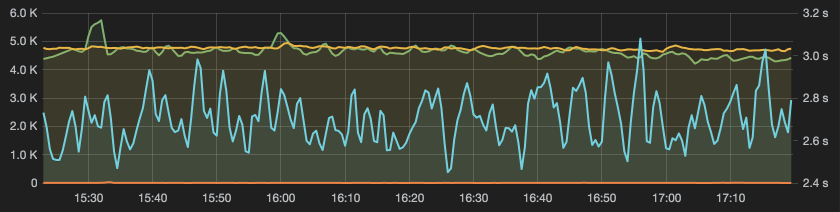

Obviously, that requires taking samples frequently, then storing and analyzing them. This was not always feasible, but chances are that you are already doing it in your application: it's the job of the Time Series Database (TSDB), like OpenTSDB, InfluxDB, Graphite, Prometheus, etc. What they do is efficiently record many periodic samples of your metric, and serve them to some graphing engine like Grafana.

Once you have that, you probably don't need complex suggestive statistics to communicate how a value changed, because you stored a complete history, and can just visualize it.

However, you must be careful to store and visualize raw data, or you'll again have to keep in mind how the statistic you stored represents the underlying values. (Think, for example, of a graph of the CPU load vs. a graph of the 5-minute load average.)

Also, try guessing how much memory it would take to keep all the request timings of the last 5 seconds, at nanosecond precision, at 5000qps... it's 200kB (25,000 values of 8 bytes each). You have 200kB. And that kills the need to carefully choose which representative sample to keep.
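If you want to redo that back-of-the-envelope math for your own traffic, here is a tiny sketch. `BufferBytes` is a made-up helper, and it assumes every sample is stored as a fixed-size value (8 bytes for an int64 of nanoseconds), ignoring any slice or allocator overhead.

```go
package main

import "fmt"

// BufferBytes estimates the memory needed to keep every sample of a window,
// assuming each sample is stored as a fixed-size value.
func BufferBytes(qps, seconds, bytesPerSample int) int {
	return qps * seconds * bytesPerSample
}

func main() {
	// 5000 requests per second, 5 seconds, one int64 (8 bytes) per request.
	fmt.Println(BufferBytes(5000, 5, 8)) // prints 200000, i.e. 200kB
}
```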

Metrics types

With that in mind let's take a look at the different types of values you might want to monitor, and what you can use to do it.

We'll refer to the terminology of the Metrics Java library, a port of which is the most popular Go metrics library.

An arbitrary value

Starting off easy. This is what they call a Gauge. It's nothing more than an absolute value that doesn't need interpretation and can vary arbitrarily.

Examples: queue size, CPU load.

Depending on what the value represents, there might be appropriate statistics to represent it (like the Unix load averages), but when you are using a TSDB and a graph, you really don't need anything other than the raw samples to show on the Y axis.

Store it in an int64 with sync/atomic or use an expvar.Int (which is the same thing if you look into it), pass it to the TSDB, slap it in a graph and you're good.
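As a minimal sketch of both options: `queueSize`, `Enqueue`, `Dequeue` and the `"workers"` variable name are invented for illustration, and how the value actually reaches your TSDB is left out.

```go
package main

import (
	"expvar"
	"fmt"
	"sync/atomic"
)

// queueSize is a hypothetical gauge: an absolute value that can go up or down.
var queueSize int64

// Enqueue and Dequeue adjust the gauge atomically, so they are safe to call
// from many goroutines without a mutex.
func Enqueue() { atomic.AddInt64(&queueSize, 1) }
func Dequeue() { atomic.AddInt64(&queueSize, -1) }

// QueueSize returns the current value: the raw sample to hand to the TSDB.
func QueueSize() int64 { return atomic.LoadInt64(&queueSize) }

// workers is the same idea with expvar, which additionally registers the
// variable under a name so it can be published as JSON.
var workers = expvar.NewInt("workers")

func main() {
	Enqueue()
	Enqueue()
	Dequeue()
	workers.Set(4)
	fmt.Println(QueueSize(), workers.Value()) // prints "1 4"
}
```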

A rate

Then there are discrete events for which you want to know "how often is this happening?" The libraries offer to handle them with a Meter or a Counter. Let's see why a Counter is enough.

Examples: requests per second, exception rates.

The rawest form of the data--the one to pass to your TSDB--here is a simple counter, incremented every time the event happens. Obviously, you can't plot that directly on the Y axis, as you would only see a monotonically increasing line, and the rate would not be easy to tell. Thankfully, most graphing engines have a box to tick (often called "Counter" or "Rate") to plot the rate at which the value increases instead of the value itself. So if, for example, the samples were 1.0s: 10, 2.0s: 10, 3.0s: 15, 4.0s: 25, 5.0s: 25, it would plot 1.0s: 10, 2.0s: 0, 3.0s: 5, 4.0s: 10, 5.0s: 0 (assuming the counter started from 0). Exactly what we needed to know!
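What that tick box does can be sketched in a few lines. This is not how any specific graphing engine implements it; `Sample` and `Rates` are names I made up, and I added a 0.0s: 0 sample so that the first plotted point comes out as in the example above.

```go
package main

import "fmt"

// Sample is one scrape of a monotonically increasing counter.
type Sample struct {
	Seconds float64 // when the counter was read
	Count   int64   // counter value at that time
}

// Rates turns consecutive counter samples into per-second rates:
// the increase between two samples divided by the time between them.
func Rates(samples []Sample) []float64 {
	rates := make([]float64, 0, len(samples))
	for i := 1; i < len(samples); i++ {
		dt := samples[i].Seconds - samples[i-1].Seconds
		if dt <= 0 {
			continue // skip duplicate or out-of-order samples
		}
		dv := samples[i].Count - samples[i-1].Count
		rates = append(rates, float64(dv)/dt)
	}
	return rates
}

func main() {
	samples := []Sample{{0, 0}, {1, 10}, {2, 10}, {3, 15}, {4, 25}, {5, 25}}
	fmt.Println(Rates(samples)) // prints [10 0 5 10 0]
}
```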

If instead you were to use a Meter, some other statistics would be kept: 1-, 5- and 15-minute EWMAs (Rate1/5/15) and a mean (RateMean). The Exponentially Weighted Moving Average is the same statistic behind the Unix load averages. It is kept by resetting the counter every 5 seconds (hardcoded in go-metrics), dividing it by 5s to get an "instant" rate, taking the difference from the latest EWMA, multiplying it by a reducing factor, and then adding it back to the latest EWMA. The reducing factor is tuned so that, for example, the 1-minute EWMA is made up of about 63% of the rate from the last 60s, plus 37% of the rate from the start up to 60s ago. The code might be clearer. A graph of an EWMA is slow to change, and probably not what you want unless you really understand what an EWMA shows.
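To make those steps concrete, here is a sketch of the update rule written from the description above, not copied from go-metrics. The type and method names are mine, the reducing factor is assumed to be 1 - exp(-5s/window), seeding the average with the first instant rate is a choice of this sketch, and it is not goroutine-safe.

```go
package main

import (
	"fmt"
	"math"
)

const tickSeconds = 5.0 // how often Tick is expected to be called

// EWMA is an exponentially weighted moving average of an event rate.
type EWMA struct {
	alpha     float64 // reducing factor applied on every tick
	rate      float64 // current average, in events per second
	uncounted int64   // events seen since the last tick
	seeded    bool
}

// NewEWMA returns an EWMA over the given window, e.g. 60 for the 1-minute one.
func NewEWMA(windowSeconds float64) *EWMA {
	return &EWMA{alpha: 1 - math.Exp(-tickSeconds/windowSeconds)}
}

// Mark records n events.
func (e *EWMA) Mark(n int64) { e.uncounted += n }

// Tick folds the events of the last 5 seconds into the average.
func (e *EWMA) Tick() {
	instant := float64(e.uncounted) / tickSeconds
	e.uncounted = 0
	if !e.seeded {
		e.rate, e.seeded = instant, true
		return
	}
	e.rate += e.alpha * (instant - e.rate)
}

// Rate returns the current average rate in events per second.
func (e *EWMA) Rate() float64 { return e.rate }

func main() {
	e := NewEWMA(60)
	e.Mark(500) // 100 events per second for one tick
	e.Tick()
	for i := 0; i < 12; i++ { // then 60 seconds of silence
		e.Tick()
	}
	fmt.Printf("%.1f\n", e.Rate()) // prints 36.8: the old rate still weighs 37%
}
```

Note how, a full minute after the events stopped, the 1-minute average still reports more than a third of the old rate. That is the "slow to change" behavior you would see in a graph.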

The mean rate instead is as simple as it is useless. It's just total events divided by seconds since start. Why you would want a graph of that escapes me.

Finally, if your system really can't figure out the rate from a counter, you can do it in your application: for example, if you pull samples every 5 seconds, reset the counter every time you do, and store the counter divided by 5.
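A sketch of that approach with sync/atomic follows. `events`, `Mark` and `TakeRate` are hypothetical names, and the exporter is assumed to call `TakeRate` once per pull interval.

```go
package main

import (
	"fmt"
	"sync/atomic"
)

// events counts occurrences since the last export.
var events int64

// Mark records one event; safe to call from many goroutines.
func Mark() { atomic.AddInt64(&events, 1) }

// TakeRate reads and resets the counter in a single atomic step, so no event
// is lost or counted twice, and returns events per second over the interval.
func TakeRate(intervalSeconds float64) float64 {
	n := atomic.SwapInt64(&events, 0)
	return float64(n) / intervalSeconds
}

func main() {
	for i := 0; i < 50; i++ {
		Mark()
	}
	fmt.Println(TakeRate(5)) // prints 10
	fmt.Println(TakeRate(5)) // prints 0: the counter was reset by the pull
}
```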

See also: Meters docs.

A values distribution

But not all events are just occurrences to count: some carry values that we want to keep track of, like how long it took to serve each HTTP request. This is what the library addresses with a Histogram.

Examples: response timings, database latency.

The fundamental concept is that you can't easily visualize all the individual measurements, so you have to compute some numeric statistics about their distribution. These are pretty well known and understood, like the average, the percentiles ("X% of the requests took less than"), the median, the minimum and the maximum.
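For reference, here is a small sketch of how those statistics can be computed over a buffer of values. `Stats` and `Summarize` are invented names, and the percentiles use the nearest-rank definition, which is one of several common ones.

```go
package main

import (
	"fmt"
	"sort"
)

// Stats holds a few distribution statistics for a set of values.
type Stats struct {
	Min, Max, Mean, Median, P95 float64
}

// percentile returns the nearest-rank p-th percentile of sorted values:
// the smallest value such that at least p% of the values are <= it.
func percentile(sorted []float64, p int) float64 {
	rank := (p*len(sorted) + 99) / 100 // integer ceil(p/100 * n)
	if rank < 1 {
		rank = 1
	}
	return sorted[rank-1]
}

// Summarize computes the statistics without modifying its input.
func Summarize(values []float64) Stats {
	if len(values) == 0 {
		return Stats{}
	}
	sorted := append([]float64(nil), values...)
	sort.Float64s(sorted)
	sum := 0.0
	for _, v := range sorted {
		sum += v
	}
	return Stats{
		Min:    sorted[0],
		Max:    sorted[len(sorted)-1],
		Mean:   sum / float64(len(sorted)),
		Median: percentile(sorted, 50),
		P95:    percentile(sorted, 95),
	}
}

func main() {
	// Made-up response times in milliseconds, with one slow outlier.
	s := Summarize([]float64{12, 15, 11, 250, 14, 13, 16, 12, 15, 14})
	fmt.Println(s.Mean, s.Median, s.P95) // prints 37.2 14 250
}
```

The single 250ms request drags the mean far from the median, which is why it pays to export percentiles and not only the average.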

Obviously it's unfeasible and not that meaningful to compute these statistics over all the values since the start of the service. However, it's reasonably easy to keep a buffer of the last N values, or of the values from the last N seconds, and to store the statistics computed over those. The Java library calls these respectively Sliding Window Reservoir and Sliding Time Window Reservoir. (I was sad to find out that go-metrics doesn't offer them at all--see below for how to build your own with a UniformSample.)
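The "last N values" buffer is easy to sketch as a circular buffer. This is my own minimal version, not the Java library's implementation; `Ring` and its methods are made-up names, and `NewRing` assumes n is greater than zero.

```go
package main

import (
	"fmt"
	"sync"
)

// Ring keeps the last N values added to it, overwriting the oldest one.
type Ring struct {
	mu   sync.Mutex
	buf  []int64
	next int  // index the next value will be written to
	full bool // true once the buffer has wrapped around
}

// NewRing returns a buffer for the last n values; n must be > 0.
func NewRing(n int) *Ring { return &Ring{buf: make([]int64, n)} }

// Add stores v, replacing the oldest value once the buffer is full.
func (r *Ring) Add(v int64) {
	r.mu.Lock()
	defer r.mu.Unlock()
	r.buf[r.next] = v
	r.next = (r.next + 1) % len(r.buf)
	if r.next == 0 {
		r.full = true
	}
}

// Values returns a copy of the stored values, oldest first.
func (r *Ring) Values() []int64 {
	r.mu.Lock()
	defer r.mu.Unlock()
	if !r.full {
		return append([]int64(nil), r.buf[:r.next]...)
	}
	out := make([]int64, 0, len(r.buf))
	out = append(out, r.buf[r.next:]...)
	return append(out, r.buf[:r.next]...)
}

func main() {
	r := NewRing(3)
	for v := int64(1); v <= 5; v++ {
		r.Add(v)
	}
	fmt.Println(r.Values()) // prints [3 4 5]
}
```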

IMHO, that is all you really need. In a graph with one point per minute, you only want each point to represent the average (or percentile, or variance, etc.) of the last minute or so of values, because you have the rest of the graph to tell you about the previous ones. Again, my argument is that datapoints in your TSDB should express "instant" measurements, and leave history to the graph. (Where "instant" means representative of what happened since the last datapoint.)

The only thing to be careful about is that if exporting doesn't happen often enough (at least every N requests, or every N seconds), events will be dropped, and any spike among them will become completely invisible. On the other hand, I wouldn't worry that much about the unbounded size of the time-based buffer: these days memory abounds, and even 5 seconds of int64 at 100kqps (4MB) will fit in 5MB.

The fixed-size buffer can be implemented easily and efficiently as a circular buffer, but it has the downside of changing behavior based on the rate of the events: the same N values cover a short span of time under heavy traffic and a long one when traffic is light. The sliding time window buffer is more complex and heavier, but it's more stable.
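The time-based variant can be sketched as follows. Again this is a simplified version of my own: `TimeWindow`, `AddAt` and `ValuesAt` are invented names, timestamps are passed in explicitly (and assumed to be non-decreasing) to keep the example deterministic, and memory is bounded only by how many events arrive within the window.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

type timed struct {
	at time.Time
	v  int64
}

// TimeWindow keeps the values observed during the last `window` of time.
type TimeWindow struct {
	mu     sync.Mutex
	window time.Duration
	items  []timed // in arrival order, oldest first
}

func NewTimeWindow(window time.Duration) *TimeWindow {
	return &TimeWindow{window: window}
}

// prune drops every entry that is at least one window older than now.
func (w *TimeWindow) prune(now time.Time) {
	cutoff := now.Add(-w.window)
	i := 0
	for i < len(w.items) && !w.items[i].at.After(cutoff) {
		i++
	}
	w.items = append(w.items[:0], w.items[i:]...)
}

// AddAt records v as observed at time at.
func (w *TimeWindow) AddAt(at time.Time, v int64) {
	w.mu.Lock()
	defer w.mu.Unlock()
	w.prune(at)
	w.items = append(w.items, timed{at, v})
}

// ValuesAt returns the values still inside the window at time now.
func (w *TimeWindow) ValuesAt(now time.Time) []int64 {
	w.mu.Lock()
	defer w.mu.Unlock()
	w.prune(now)
	out := make([]int64, len(w.items))
	for i, it := range w.items {
		out[i] = it.v
	}
	return out
}

// example feeds three values into a 5-second window and reads it at t=7s.
func example() []int64 {
	start := time.Unix(0, 0)
	w := NewTimeWindow(5 * time.Second)
	w.AddAt(start, 10)
	w.AddAt(start.Add(3*time.Second), 20)
	w.AddAt(start.Add(6*time.Second), 30)
	return w.ValuesAt(start.Add(7 * time.Second))
}

func main() {
	fmt.Println(example()) // prints [20 30]: the value from t=0s has expired
}
```

In real use you would pass `time.Now()` to both methods.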

Other samples you might encounter

An exponentially decaying sample is more complex, but the core idea is that it gives more priority to recent events, while keeping a memory of some older ones. Like an EWMA, it's in the business of letting past values influence the current value, which is not what you want when you have a TSDB and graphs. I'm not the only one to think it has issues as a live metric.

A uniform sample is the equivalent of the mean rate above: it keeps a fixed-size sample that is equally representative of the entire history of values. If you were to keep all the values and then take a random sample, the result would be the same. The code is super-simple. Like the mean rate, it's definitely not live monitoring if used as-is.
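To show just how simple, here is a sketch of the classic reservoir sampling technique (often called Algorithm R) that gives this behavior. It is written from scratch and is not the library's code; the names are mine, the random source is seeded explicitly for reproducibility, and the type is not goroutine-safe.

```go
package main

import (
	"fmt"
	"math/rand"
)

// UniformSample keeps at most `size` values, each value ever seen having
// the same probability of being in the sample.
type UniformSample struct {
	size   int
	count  int64 // how many values have been seen in total
	values []int64
	rng    *rand.Rand
}

func NewUniformSample(size int, seed int64) *UniformSample {
	return &UniformSample{size: size, rng: rand.New(rand.NewSource(seed))}
}

// Update offers v to the sample.
func (s *UniformSample) Update(v int64) {
	s.count++
	if len(s.values) < s.size {
		s.values = append(s.values, v) // still filling up: keep everything
		return
	}
	// Keep v with probability size/count, evicting a random older value.
	if i := s.rng.Int63n(s.count); i < int64(s.size) {
		s.values[i] = v
	}
}

// Count returns the number of values seen; Values returns a copy of the sample.
func (s *UniformSample) Count() int64    { return s.count }
func (s *UniformSample) Values() []int64 { return append([]int64(nil), s.values...) }

func main() {
	s := NewUniformSample(10, 1)
	for v := int64(0); v < 1000; v++ {
		s.Update(v)
	}
	// Ten values drawn from the whole history, not just the recent part.
	fmt.Println(s.Count(), len(s.Values()), s.Values())
}
```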

However, a few big enough uniform samples can be used to build a fixed time window: every period (or every sample) just rotate the in-use sample, resetting the oldest, like gokit and github.com/codahale/metrics.Histogram do.
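The rotation idea can be sketched like this. It is not the code of either library: `Rotating` and its methods are invented, each bucket is a plain unbounded slice for brevity (a bounded uniform sample could stand in for it), and `Rotate` is assumed to be called once per period, for instance from a `time.Ticker` loop.

```go
package main

import (
	"fmt"
	"sync"
)

// Rotating approximates a time window with n buckets, one per period.
type Rotating struct {
	mu      sync.Mutex
	buckets [][]int64
	cur     int // index of the bucket currently receiving values
}

// NewRotating returns a window made of n buckets; n must be > 0.
func NewRotating(n int) *Rotating {
	return &Rotating{buckets: make([][]int64, n)}
}

// Add records v in the in-use bucket.
func (r *Rotating) Add(v int64) {
	r.mu.Lock()
	defer r.mu.Unlock()
	r.buckets[r.cur] = append(r.buckets[r.cur], v)
}

// Rotate moves on to the next bucket, resetting it: that bucket held the
// oldest period, which now falls out of the window.
func (r *Rotating) Rotate() {
	r.mu.Lock()
	defer r.mu.Unlock()
	r.cur = (r.cur + 1) % len(r.buckets)
	r.buckets[r.cur] = r.buckets[r.cur][:0]
}

// Values returns every value in the window, in no particular order.
func (r *Rotating) Values() []int64 {
	r.mu.Lock()
	defer r.mu.Unlock()
	var out []int64
	for _, b := range r.buckets {
		out = append(out, b...)
	}
	return out
}

func main() {
	r := NewRotating(3)
	r.Add(1)
	r.Rotate()
	r.Add(2)
	r.Rotate()
	r.Add(3)
	r.Rotate() // the bucket holding 1 is reset and reused
	r.Add(4)
	fmt.Println(r.Values()) // prints [4 2 3]
}
```

With n buckets of one period each, the window covers between n-1 and n periods, depending on how far into the current period you are.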

See also: Histogram documentation--the Reservoirs are the samples, and they are explained quite well there.

Delegating the math

If performance allows, an alternative approach is to delegate the distribution analysis to an external system. You send all raw measurements (like, one for each HTTP request) to a different system, and that system does the statistics and exports them to the TSDB. Not much changes as you still need to understand what that system is doing.

For example this is the job of statsd: it buffers all the datapoints you send it and then computes the statistics every time it's "flushed" (i.e. a measurement is exported to the TSDB). This behavior is similar to the fixed time window you can build out of rolling UniformSamples.
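The buffer-then-flush behavior can be illustrated with a toy aggregator. This is only a model of the idea, not statsd's code or protocol: `Aggregator`, `Observe`, `Flush` and `Summary` are made-up names, and a real system would compute more statistics than these three.

```go
package main

import (
	"fmt"
	"sync"
)

// Summary is what gets exported to the TSDB at each flush.
type Summary struct {
	Count int
	Mean  float64
	Max   float64
}

// Aggregator buffers every datapoint it receives until the next flush.
type Aggregator struct {
	mu     sync.Mutex
	values []float64
}

// Observe records one raw measurement.
func (a *Aggregator) Observe(v float64) {
	a.mu.Lock()
	defer a.mu.Unlock()
	a.values = append(a.values, v)
}

// Flush computes the statistics over everything received since the previous
// flush, then empties the buffer so the next period starts from scratch.
func (a *Aggregator) Flush() Summary {
	a.mu.Lock()
	defer a.mu.Unlock()
	if len(a.values) == 0 {
		return Summary{}
	}
	s := Summary{Count: len(a.values), Max: a.values[0]}
	sum := 0.0
	for _, v := range a.values {
		sum += v
		if v > s.Max {
			s.Max = v
		}
	}
	s.Mean = sum / float64(s.Count)
	a.values = a.values[:0]
	return s
}

func main() {
	var a Aggregator
	for _, v := range []float64{1, 2, 3} {
		a.Observe(v)
	}
	s := a.Flush()
	fmt.Println(s.Count, s.Mean, s.Max) // prints 3 2 3
	fmt.Println(a.Flush().Count)        // prints 0: nothing carries over
}
```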

Conclusion

I hope this piece helped you better understand those graphs that fill the TV screens in the office, and realize that once you have a TSDB and sync/atomic, all you really need from a library (and a Mutex) is a circular buffer or a sliding time window.

Sadly and surprisingly, go-metrics only implements the nuanced ExpDecaySample and the useless-as-is UniformSample. github.com/codahale/metrics.Histogram is essentially a nice 5-minute sliding time window, and you can build your own by emulating what it does.

Anyway, you might want to follow me on Twitter.